On Jan. 30, some Frederick area residents received a notification that firefighters were battling a “commercial blaze” downtown.

A screenshot of the alert was shared to Facebook, sparking concern that was quickly dispelled by one commenter who wrote: “I’m sitting in an office in that building and there is nothing going on.”

An emergency notification app that uses artificial intelligence had misinterpreted radio traffic from a training exercise that simulated a structure fire downtown, according to a post from the Frederick-Firestone Fire Protection District.

“This incident is a good reminder of the importance of verifying information through multiple reliable sources before sharing or acting on it,” the post reads.

Summer Campos, a spokesperson for the district, said she wasn’t sure how the app had access to the channel firefighters were using. In the future, the district will be using a tactical channel that doesn’t air publicly, she said.

Campos couldn’t confirm what app had alerted residents to the false situation. But CrimeRadar, an app that uses AI to summarize publicly available dispatch audio, had a post that described a fire in downtown Frederick.

Such false alerts are not unique to Frederick. In Boulder and Longmont, AI-driven emergency notifications have spread false information that, in some instances, has sparked very real concern.

Incorrect alerts

On Wednesday, CrimeRadar sent out an alert reporting an apartment fire in Longmont. Linked dispatch audio didn’t include a location, and Rogelio Mares, a spokesperson for the city, said the city hadn’t received reports of any apartment fires. The post later disappeared from CrimeRadar.

CrimeRadar monitors publicly available dispatch audio and then uses AI to generate summaries, according to its website. Users access alerts on the CrimeRadar website or by downloading the CrimeRadar app, which also sends push alerts.

While Longmont police radio transmissions are encrypted, Longmont Fire radio traffic is aired, Mares said.

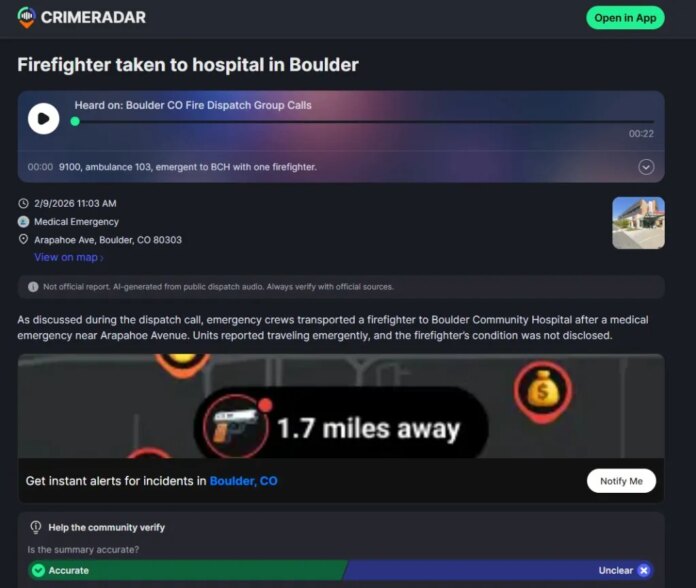

In Boulder, the app recently reported that a firefighter was taken to the hospital after a medical emergency. The summary said “the firefighter’s condition was not disclosed.”

No Boulder firefighters were injured that day, according to Jamie Barker, a Boulder Fire-Rescue spokesperson. The app appeared to mistake “fire rider” — a term commonly used by dispatchers to describe firefighters riding in ambulances to provide care to patients — for “firefighter.”

“It took information that it heard incorrectly, and then it summarized it incorrectly, and then also made an assumption,” Barker said.

On Wednesday, the app posted about 60 reports of incidents in the Longmont area, including summaries that accurately reflected linked dispatch audio describing medical emergencies and a reported assault. When a fire sparked in the Colo. 119 median east of Longmont on Wednesday, a CrimeRadar post accurately described the situation.

Even when apps interpret dispatch audio correctly, the information could still be inaccurate or incomplete, as dispatch communications can be partial and unverified if crews are not yet on scene, Barker said.

“The scanner can be an exceptional tool and resource, but the scanner also only ever tells half of the story,” Barker said.

Such false alerts can be harmful, especially if the company in charge of the system puts it forth as accurate, according to Casey Fiesler, a professor of information science at the University of Colorado Boulder who researches AI ethics.

“If someone gets an alert saying that there’s a fire, that’s going to be very upsetting,” she said.

“People often think that machines are less biased or more accurate than humans, so for this reason, I just think it’s really, really important that systems like this have very strong disclaimers about how information might not be accurate,” Fiesler added.

CrimeRadar said in a statement it is “constantly improving” its system to “ensure higher precision.”

When a user clicks into a CrimeRadar alert to see more details, a disclaimer is posted above the AI-generated summary that reads, “Not official report. AI-generated from public dispatch audio. Verify with official sources.”

“Our goal is to make communities safer by making emergency information accessible, which is why our disclaimer has been a core feature since day one,” the CrimeRadar team wrote in the statement. “It serves as a constant reminder to users that dispatch calls are unconfirmed and to always rely on official sources for final confirmation.”

Misinformation spreads quickly

Nextdoor, which uses the AI-powered Samdesk to generate alerts, has also sent out incorrect information to Boulder County communities.

Last fall, a Nextdoor alert reporting an active shooter at a federal facility in Boulder quickly generated concern. A screenshot was shared to Reddit, Boulder dispatch began getting several calls from concerned community members, and police were scrambling to get accurate information, Dionne Waugh, a Boulder police spokesperson, said.

The Nextdoor alert turned out to be false. The AI had scraped information from an old online post, Waugh said.

The panic generated by these false alerts can be particularly harmful in Boulder, which has seen large-scale tragedies in recent years, including the 2021 King Soopers shooting and, just this summer, a targeted attack on Pearl Street.

“Every time something like that happens, it takes people back to the real moments,” Waugh said.

“We understand how distressing a false alert can be for residents, and we regret the concern these incidents caused,” a Nextdoor spokesperson wrote in a statement.

The company worked directly with both Boulder and Longmont police after the departments expressed concerns in late 2025.

Nextdoor alerts are processed through a review system and “augmented by human oversight as needed,” according to the statement.

The app has recently added verification layers for alerts that involve incidents like mass shootings and wildfires, and has given local public agencies access to an alerts map so “boots on the ground” experts can flag or correct inaccuracies within their jurisdictions.

Nextdoor and CrimeRadar said they remove posts when inaccuracies are identified.

Waugh said removing posts doesn’t address the issue because it doesn’t clarify whether something actually happened.

Fiesler agreed. Correcting misinformation once it spreads can be extremely difficult, she said, as misinformation spreads more quickly than corrections.

“Even if the first person posts a correction, that’s not going to make its way to everyone that saw it,” she said.

Where to verify information

Police and fire departments have urged community members using CrimeRadar, Nextdoor or similar services to verify information they see on these platforms.

“It could be useful, it could be another mode of getting information out quickly, but you have to know that you have to verify it elsewhere,” Fiesler said.

Waugh cautioned community members against relying on these services at all.

“Given the inaccuracy of these companies, I wouldn’t rely on them,” she said.

Instead, community members should get their information directly from the police department or from traditional media outlets, Waugh said.

“The most accurate and best information is always going to come from your local law enforcement or your local fire department,” Barker said. “It’s nice to try and find a shortcut to that information through AI, but if you really want to know 100% sure that what you’re hearing is true and real, then you gotta go to the departments for that.”